AR Interaction

Panning & Zooming in 3D Dataspaces

In this project, my colleagues and I investigated 3D pan & zoom using mobile devices in augmented reality information spaces. To this end, we designed a series of interaction techniques that combine touch and spatial interaction and compared them in a user study.

The official video is available on YouTube.

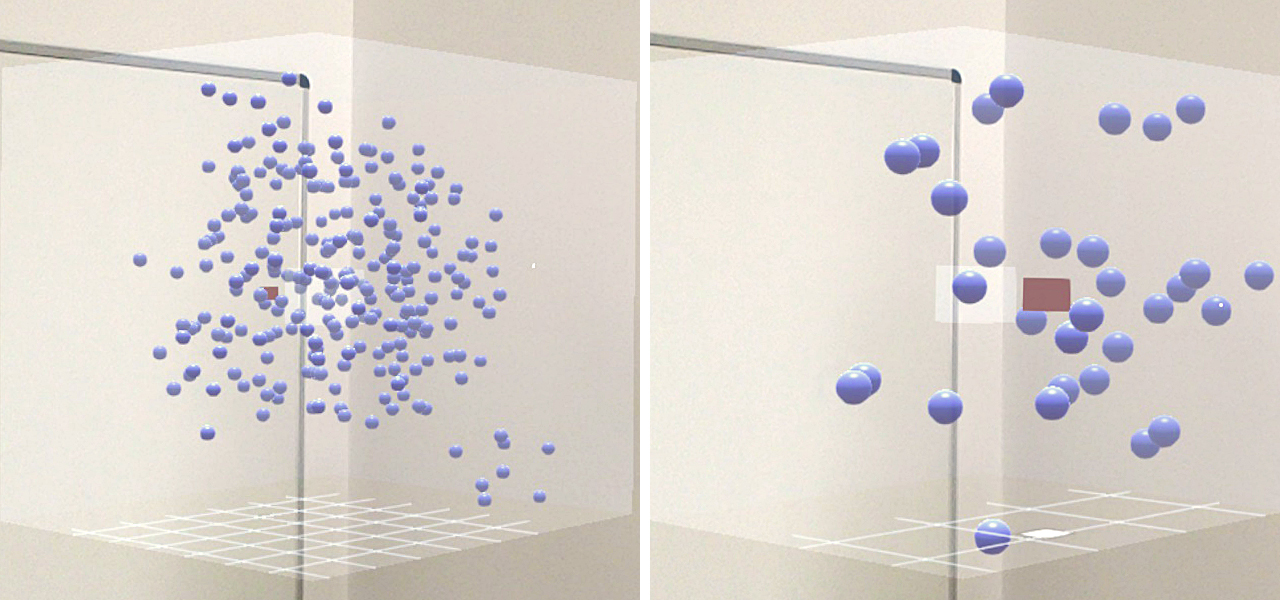

These pictures show the setup of our study prototype: the AR head-mounted display (HMD) and the phone are tracked with an infrared (IR) tracking system, and an abstract zoomable data space is located in front of the user.

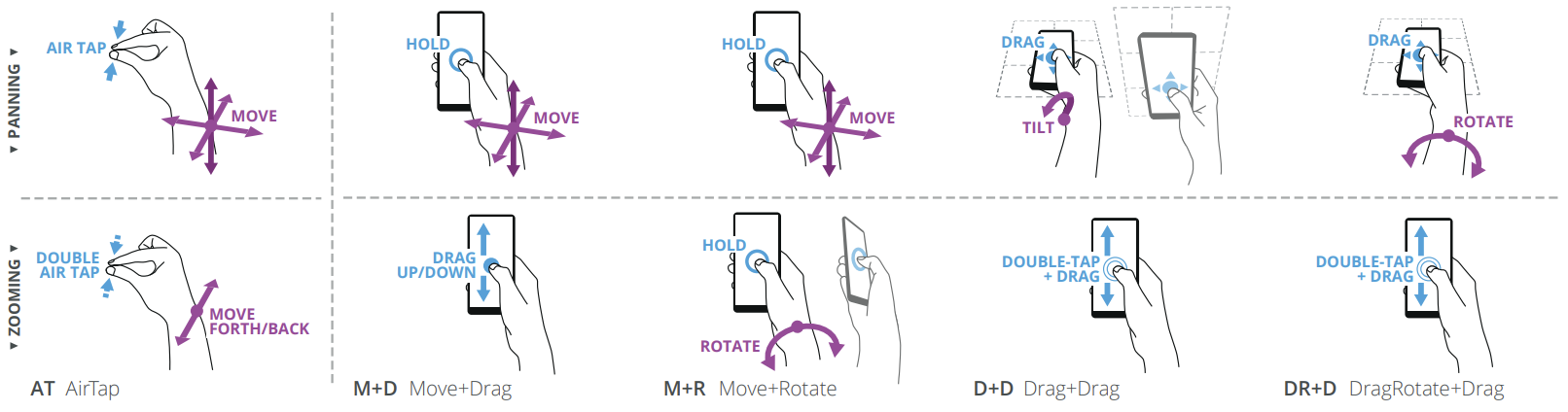

Overview of the different pan & zoom techniques from our study.

For more details about this project, please take a look at the main project website! If you would like to cite this work in your research, please cite our MobileHCI '19 paper.

References

2019

- In Proceedings of the 21st International Conference on Human-Computer Interaction with Mobile Devices and Services, Oct 2019
